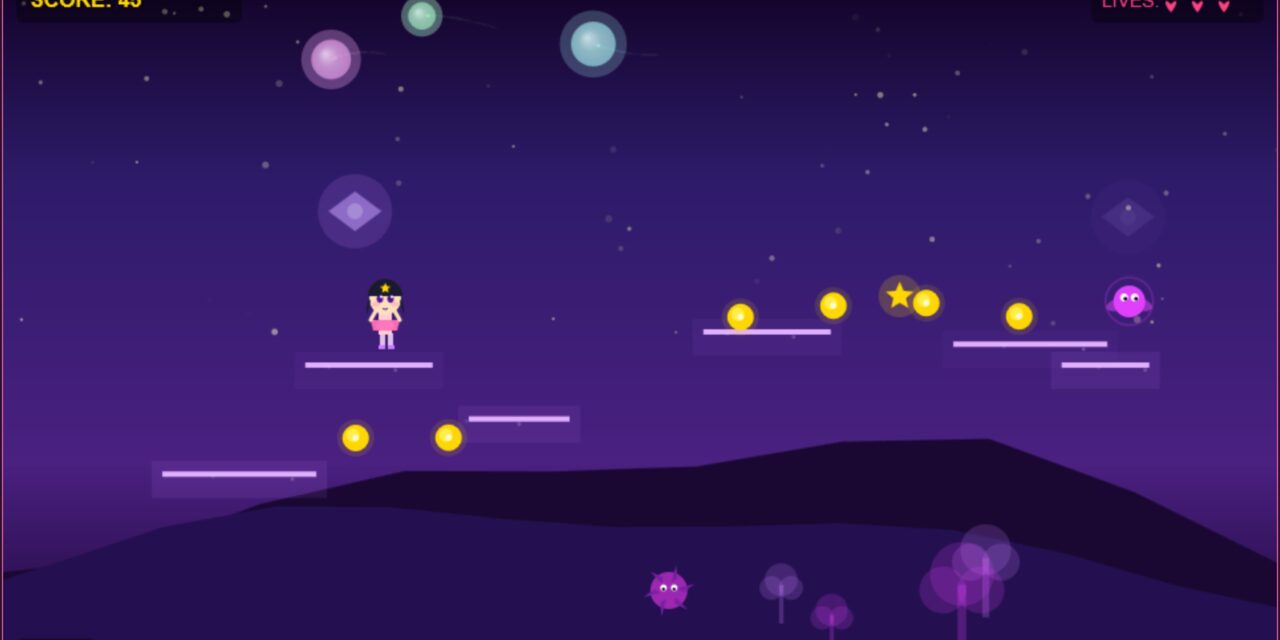

I’ve been exploring the intersection of local AI inference and creative generation, and I wanted to push it beyond the usual text and image boundaries and let the latest Qwen 3.6 model do the job. So I decided to build a browser game entirely AI-generated using Qwen 3.6 and to guide it not too much. Let the model be creative and create a game for a target ground around the age of 8 years.

Hardware & Infrastructure:

- 2x RTX A6000 GPUs for parallel inference and token generation

- Ollama as the inference server (local LLM orchestration)

- Qwen qwen3.6:35b-a3b-bf16 as the generative model

- Hermes-Agents as the Agent driving the development (very successfull)

This setup provides enough horsepower to run a large 35B parameter model efficiently while maintaining reasonable latency for interactive game development.

The Model: Qwen 3.6:35b-a3b-bf16

The Qwen 3.6 model in its 35B quantized form (bf16) struck the right balance for this project:

- Size: Large enough for coherent, creative output

- Speed: Quantized to run efficiently on dual A6000s

- Quality: Excellent instruction-following and creative generation

The Key Learning: Let the Model Breathe

Here’s what I discovered through experimentation: Don’t over-guide the model. Let it be creative.

My initial approach was heavily constrained specific rules, exact game mechanics, rigid structures. The results were… predictable and flat.

The breakthrough came when I switched to open-ended prompting:

Instead of:

“Generate a game with these exact rules: 1) Move with arrow keys 2) Collect items 3) Avoid enemies”

I tried then:

“Design a fun, engaging browser game. Think about what would make it interesting and enjoyable for a 8 year old girl. What mechanics would be surprising? What visuals would pop?”

The difference was striking. When I stepped back and let Qwen explore the design space, it generated more innovative game mechanics, unexpected twists, and creative solutions I hadn’t considered.

The lesson: Large language models are powerful ideation partners. They don’t just follow instructions they can contribute creative vision. The trick is knowing when to be prescriptive and when to be permissive.

Download:

If you like to download the small game and AGENT.md file Hermes-Agent generated then just klick the link: Jump_and_run_game

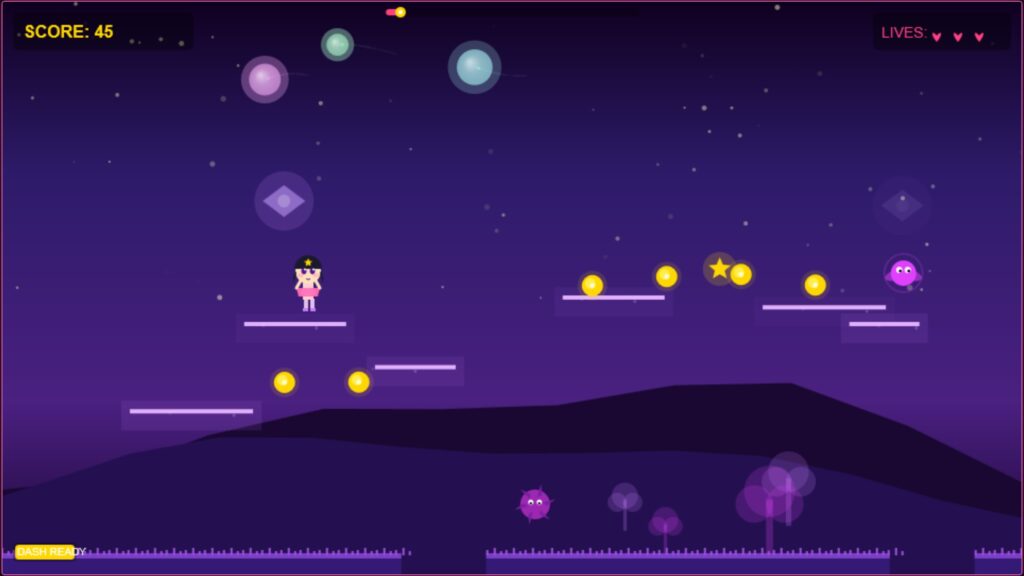

The Workflow

- Prompt Engineering (local Ollama + Qwen): High-level game concept → Qwen generates HTML and CSS / JavaScript if you ask for.

- Iteration: Review output, ask follow-up questions, refine (staying conversational, not mechanical)

- Testing: Run game directly in browser, identify issues

- Refinement Loop: Let the model fix bugs, enhance features, improve UX based on feedback

The entire process happened locally by using the Hermes-Agent no cloud API calls, no rate limits, full privacy because I am using Ollama as inferencing server.

This experiment raises some interesting questions I was thinking about after playing for a while:

- Accessibility: If 35B models can run locally on accessible hardware (dual A6000s), what becomes possible for indie developers?

- Iteration Speed: Traditional game development cycles vs. AI-assisted co-creation

- Creativity: Does guided creativity produce better results than constrained design?

- Scalability: What if tablets with integrated AI accelerators become the development platform?

The Next Frontier

The infrastructure for AI-assisted game development is already here. The models are capable. The hardware is becoming more affordable. What’s emerging is a new creative workflow where humans and AI collaborate as true partners.

This wasn’t about replacing game designers. It was about accelerating ideation and proving that local LLMs can handle complex, structured creative tasks and not just text or image generation.

The tutorial offers a clear and practical guide for setting up and running the Tensorflow Object Detection Training Suite. Could…

This works using an very old laptop with old GPU >>> print(torch.cuda.is_available()) True >>> print(torch.version.cuda) 12.6 >>> print(torch.cuda.device_count()) 1 >>>…

Hello Valentin, I will not share anything related to my work on detecting mines or UXO's. Best regards, Maker

Hello, We are a group of students at ESILV working on a project that aim to prove the availability of…